Supabase Setup

Create a Supabase project and load the schema — 5 minutes.

Supabase is your database + auth + file storage. One signup, one setup, and DirectoryKit is ready to accept signups and submissions.

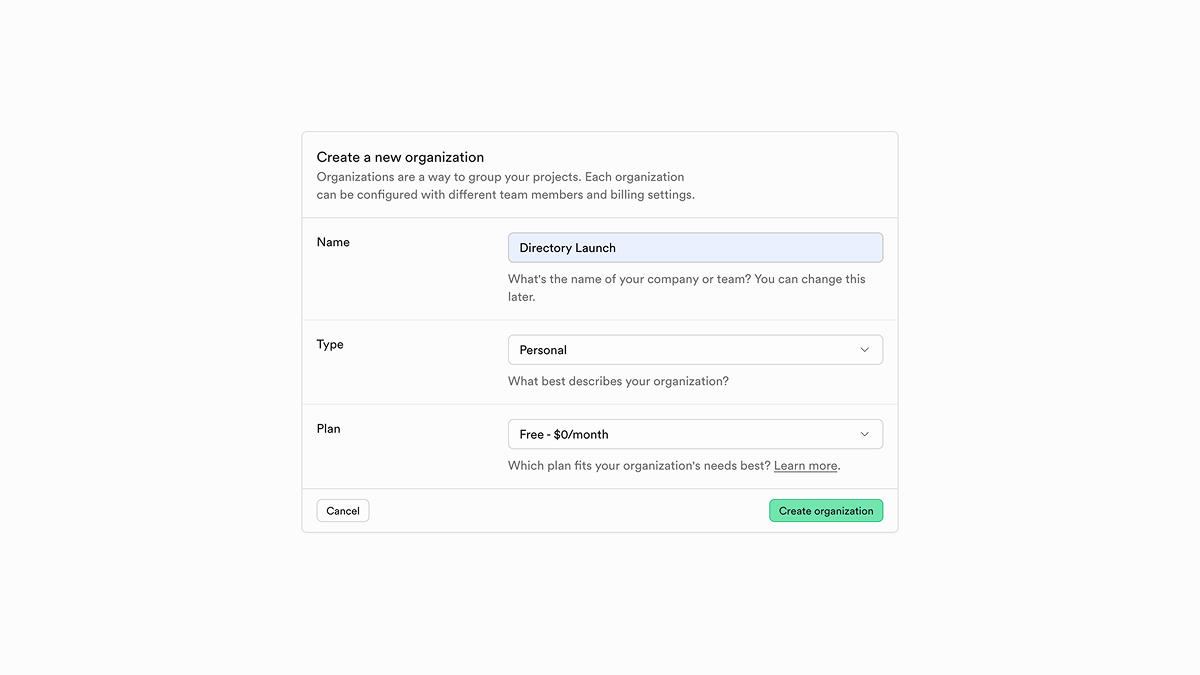

1. Create a new project

supabase.com → New Project.

- Name: anything — this is just for your dashboard.

- Database Password: generate one in your password manager and save it there. You'll rarely need it after this.

- Region: pick the data center closest to most of your users.

- Pricing plan: the Free tier is enough to launch.

Wait ~2 minutes for the database to provision.

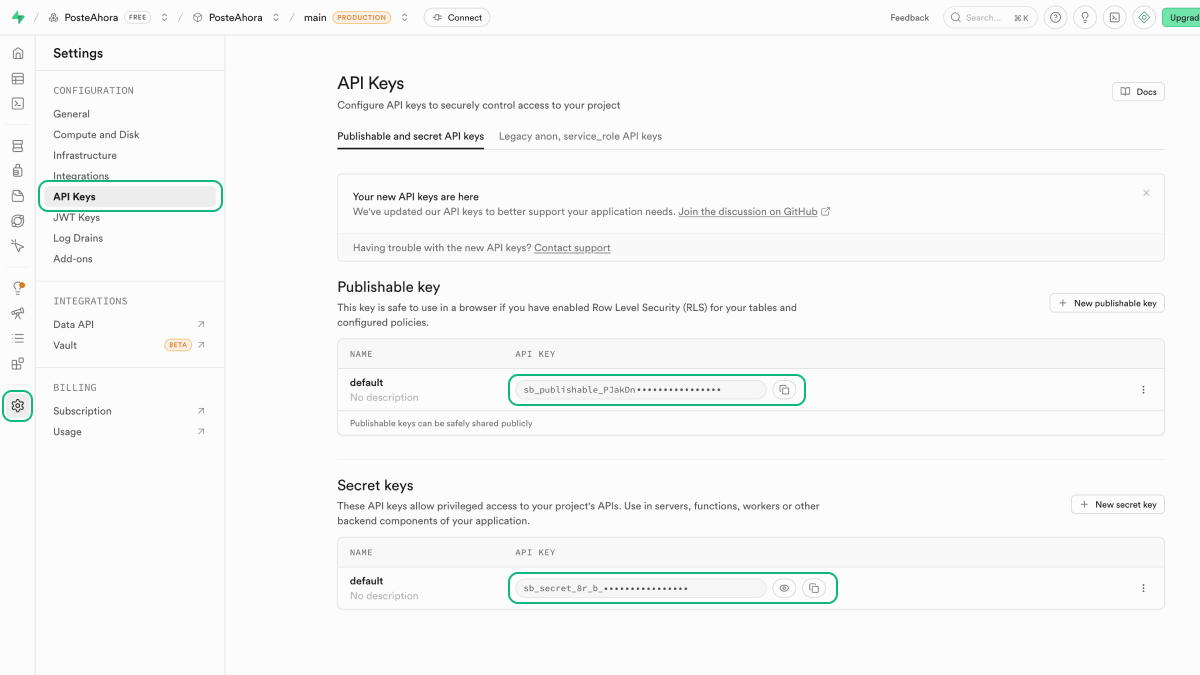

2. Grab your keys

Project URL lives under Project Settings → API. The keys live under Project Settings → API Keys.

| Value | Env var | Format |
|---|---|---|
| Project URL | NEXT_PUBLIC_SUPABASE_URL | https://<ref>.supabase.co |
| Publishable key | NEXT_PUBLIC_SUPABASE_PUBLISHABLE_KEY | sb_publishable_… (browser-safe, respects RLS) |
| Secret key | SUPABASE_SECRET_KEY | sb_secret_… (server-only, bypasses RLS) |

Paste the values into .env.local at the project root:

NEXT_PUBLIC_SUPABASE_URL=https://xxx.supabase.co

NEXT_PUBLIC_SUPABASE_PUBLISHABLE_KEY=sb_publishable_...

SUPABASE_SECRET_KEY=sb_secret_...

If your Supabase project pre-dates the new key system and only shows you anon (eyJ...) and service_role (eyJ...) keys, you can keep using them: lib/supabase/env.ts automatically falls back to NEXT_PUBLIC_SUPABASE_ANON_KEY and SUPABASE_SERVICE_ROLE_KEY when the new variables aren't set. The migration script does the same fallback. Supabase has announced that legacy keys will be removed in late 2026, so new projects should prefer the new names.
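The fallback order described above can be sketched roughly as follows. This is an illustration only, not the actual contents of lib/supabase/env.ts; the names `resolveSupabaseEnv` and `firstDefined` are hypothetical, and the environment is passed in as a plain object so the sketch has no runtime dependencies.

```typescript
// Hypothetical sketch of the new-key-first, legacy-key-second lookup.
// The real lib/supabase/env.ts may be structured differently.
type Env = Record<string, string | undefined>;

interface SupabaseEnv {
  url: string;
  publishableKey: string; // browser-safe key (new name or legacy anon)
  secretKey: string; // server-only key (new name or legacy service_role)
}

// Return the value of the first variable in `names` that is set and non-empty.
function firstDefined(env: Env, names: string[]): string {
  for (const name of names) {
    const value = env[name];
    if (value !== undefined && value.trim() !== "") return value;
  }
  throw new Error(`Missing environment variable: ${names.join(" or ")}`);
}

function resolveSupabaseEnv(env: Env): SupabaseEnv {
  return {
    url: firstDefined(env, ["NEXT_PUBLIC_SUPABASE_URL"]),
    publishableKey: firstDefined(env, [
      "NEXT_PUBLIC_SUPABASE_PUBLISHABLE_KEY",
      "NEXT_PUBLIC_SUPABASE_ANON_KEY",
    ]),
    secretKey: firstDefined(env, [
      "SUPABASE_SECRET_KEY",
      "SUPABASE_SERVICE_ROLE_KEY",
    ]),
  };
}
```

In a Node.js context this would be called as `resolveSupabaseEnv(process.env)`. Listing the new names first means a project that sets both variants uses the new keys.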

Anyone holding SUPABASE_SECRET_KEY (or legacy SUPABASE_SERVICE_ROLE_KEY) can read and write every row in your database, ignoring RLS. Keep it server-side only. Never commit it. Never put it in a NEXT_PUBLIC_* variable or a client component.
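One way to catch an accidental leak early is a small check that scans the environment for browser-exposed variables holding a secret. The sketch below is an assumption about how such a guard could look, not part of DirectoryKit; `findExposedSecrets` is a hypothetical name, and the check relies only on the `sb_secret_` prefix and on exact matches with the configured secret values.

```typescript
type Env = Record<string, string | undefined>;

// Return the names of NEXT_PUBLIC_* variables whose value looks like a secret:
// either it starts with "sb_secret_" or it equals one of the configured
// server-only keys (new or legacy).
function findExposedSecrets(env: Env): string[] {
  const secretValues = [env.SUPABASE_SECRET_KEY, env.SUPABASE_SERVICE_ROLE_KEY]
    .filter((v): v is string => typeof v === "string" && v.length > 0);

  return Object.entries(env)
    .filter(([name]) => name.startsWith("NEXT_PUBLIC_"))
    .filter(
      ([, value]) =>
        value !== undefined &&
        (value.startsWith("sb_secret_") || secretValues.includes(value)),
    )
    .map(([name]) => name);
}
```

Running it at server startup with `process.env` and failing when the result is non-empty would surface the mistake before a build ships. It cannot detect a secret hard-coded in a client component, so it complements code review rather than replacing it.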

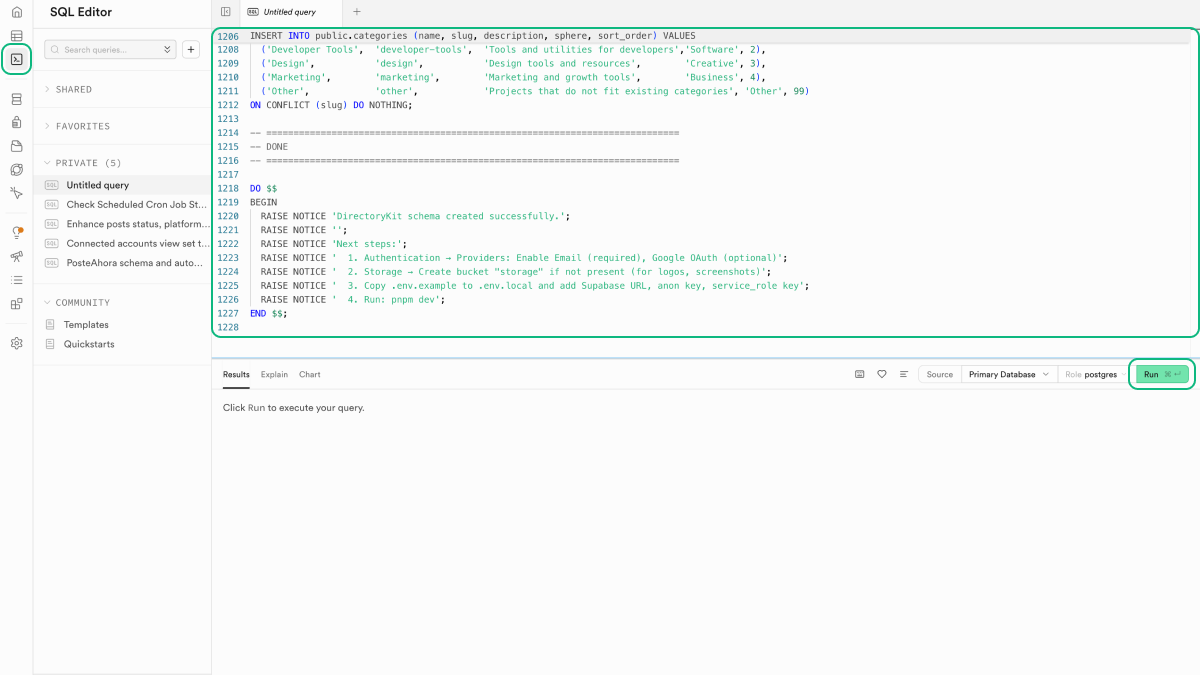

3. Run the schema

The entire database structure lives in a single file at supabase/schema.sql. Running it creates all tables, indexes, triggers, and RLS policies.

Option A — Supabase SQL Editor (easiest)

- In Supabase → SQL Editor → New query

- Open supabase/schema.sql from the project and copy its full contents

- Paste into the editor → click Run

Option B — migration script

From the project root:

pnpm db:migrate

This runs scripts/migrate-to-supabase.js, which loads supabase/schema.sql and applies it to your project via the Supabase Management RPC. The script reads SUPABASE_SECRET_KEY and falls back to SUPABASE_SERVICE_ROLE_KEY if you're still on legacy keys.

The schema is safe to run multiple times. It uses IF NOT EXISTS clauses — running it again is a no-op when the tables, indexes, triggers, and policies already exist.

4. Verify

In Supabase → Table Editor — you should see 17 tables: users, apps, categories, payments, comments, ratings, bookmarks, analytics, newsletter, competitions, promotions, partners, changelog, email_notifications, external_webhooks, link_type_changes, site_settings.
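Comparing 17 names by eye is error-prone, so a short helper can report which expected tables are absent. This is a sketch under the assumption that you have the list of table names from somewhere (for example copied from the Table Editor); `missingTables` is a hypothetical helper, not something DirectoryKit ships.

```typescript
// The 17 tables that supabase/schema.sql is expected to create.
const EXPECTED_TABLES = [
  "users", "apps", "categories", "payments", "comments", "ratings",
  "bookmarks", "analytics", "newsletter", "competitions", "promotions",
  "partners", "changelog", "email_notifications", "external_webhooks",
  "link_type_changes", "site_settings",
] as const;

// Return the expected tables that do not appear in `found`.
function missingTables(found: string[]): string[] {
  const present = new Set(found);
  return EXPECTED_TABLES.filter((table) => !present.has(table));
}
```

An empty result means every expected table exists. Extra tables in `found` are ignored, so the check still passes after you add tables of your own.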

From your project:

pnpm db:test

It should print ✓ Connected to Supabase.

5. Set up S3 storage (for file uploads)

DirectoryKit uploads logos and screenshots to S3-compatible storage. Supabase Storage provides an S3 endpoint — no separate AWS account needed.

- Supabase → Storage → Buckets → New bucket → name it storage → public

- Storage → Configuration → S3 (also reachable as Project Settings → Storage) → Enable S3 connection

- Generate credentials — copy the endpoint, access key, and secret access key

Add to .env.local:

SUPABASE_S3_ENDPOINT=https://xxx.supabase.co/storage/v1/s3

SUPABASE_S3_REGION=us-east-1

SUPABASE_S3_ACCESS_KEY_ID=...

SUPABASE_S3_SECRET_ACCESS_KEY=...

SUPABASE_S3_BUCKET=storage

6. Enable Google OAuth (optional)

See the step-by-step in Authentication.

7. Regenerate TypeScript types (advanced)

If you edit the schema directly in Supabase (adding columns, etc.), regenerate the type definitions locally:

SUPABASE_PROJECT_ID=your-project-id pnpm supabase:types

The script writes to types/supabase.ts. The file isn't checked in by default, so regenerate it whenever the schema changes; your code then picks up the new types automatically.
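To show what the generated types are for, here is a reduced stand-in for the generated file together with a helper that derives a row type from it. The overall `Database` → `public` → `Tables` → `Row`/`Insert`/`Update` nesting follows the shape Supabase's type generator emits; the column names (`id`, `name`, `website_url`) and the helpers `Row` and `describeApp` are hypothetical, so check your own types/supabase.ts for the real columns.

```typescript
// Stand-in for the generated types/supabase.ts, reduced to a single table.
// Column names here are hypothetical placeholders.
type Database = {
  public: {
    Tables: {
      apps: {
        Row: { id: string; name: string; website_url: string | null };
        Insert: { id?: string; name: string; website_url?: string | null };
        Update: { id?: string; name?: string; website_url?: string | null };
      };
    };
  };
};

// Row type for any table in the public schema.
type Row<T extends keyof Database["public"]["Tables"]> =
  Database["public"]["Tables"][T]["Row"];

// The compiler rejects this function if a referenced column is renamed or
// removed in the schema and the types are regenerated.
function describeApp(app: Row<"apps">): string {
  return app.website_url ? `${app.name} (${app.website_url})` : app.name;
}
```

In a real project the `Database` type would be imported from types/supabase.ts instead of declared inline, so a schema change surfaces as a compile error at every affected call site.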

See also

- Schema Overview — what each table stores

- Database Layer API — how to query from code

- Authentication — Supabase Auth helpers